Recently I attended a workshop on data plane acceleration, organised by the Open NFP organisation. The primary goal of the workshop was to get students and researchers (you may wonder why I was there) familiar with the P4 programming language.

P4 is a programming language created to simplify writing data planes for networking use cases. The P4-16 spec was released recently and can be considered a mature version of the language.

Now, I’m not a hardcore developer. I know my way around Python, Go and C#, but I have never written anything lower level, like C. P4 reminds me a little of Go, not as a direct language comparison, but in its overall design approach. P4 is designed so you only need to focus on the actual networking features that you want to make available on the hardware you are programming. When you compile it, the compiler generates the runtime code for your hardware. Or as the creators explain it:

At one level, P4 is just a simple language for declaring how packets are to be processed. At another level, P4 turns network system design on its head.

There is no need to worry about low-level memory management or other things that would slow down the development of that one specific feature that nobody else has in their platform.

Now this is a major advantage. Full freedom to write anything you’d like, right? The downside is that very little (basic) functionality is present when you start pushing code to a piece of hardware. You really have to do everything yourself, which I think is underestimated. P4 does help by describing some standard functionality in the spec that vendors should implement when they offer P4 capabilities, like basic switching or load balancing (ECMP).
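To give a feel for the kind of logic you end up writing yourself, here is a minimal sketch of ECMP next-hop selection. It is written in Python rather than P4, purely as an illustration. The `FiveTuple` type, the `ecmp_select` function, the choice of CRC32 as the hash and the addresses are all my own assumptions, not something taken from the P4 spec or from any vendor implementation.

```python
import zlib
from typing import NamedTuple, Sequence


class FiveTuple(NamedTuple):
    """The header fields that commonly identify a flow (illustrative)."""
    src_ip: str
    dst_ip: str
    protocol: int
    src_port: int
    dst_port: int


def ecmp_select(flow: FiveTuple, next_hops: Sequence[str]) -> str:
    """Pick one next hop for a flow by hashing its 5-tuple.

    Packets of the same flow always hash to the same next hop,
    so a flow is not spread (and reordered) across several paths.
    """
    if not next_hops:
        raise ValueError("need at least one next hop")
    key = "|".join(str(field) for field in flow).encode()
    # CRC32 is stable across runs, unlike Python's built-in hash() for strings.
    index = zlib.crc32(key) % len(next_hops)
    return next_hops[index]


# Hypothetical equal-cost next hops and a hypothetical TCP flow.
next_hops = ["10.0.0.1", "10.0.0.2", "10.0.0.3"]
flow = FiveTuple("192.0.2.10", "198.51.100.20", 6, 49152, 443)

chosen = ecmp_select(flow, next_hops)
print(chosen)
```

On real hardware the hash and the group of next hops would live in the data plane itself. The point is only that even something as common as ECMP is logic that has to be expressed somewhere before the device does anything useful.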

Who supports it?

P4 is relatively new, so hardware support is not very common yet. I was able to find two products that support the full P4 spec at this moment.

Barefoot Tofino chip

Barefoot is one of the driving forces behind the P4 language, and they have created a very fast ASIC-like chipset. Unlike silicon from Broadcom, Cavium or the large network vendors, the chipset does not rely on a proprietary SDK, but is fully open thanks to its support for P4.

They are partnering with white box vendors to create switches (32-port 100GbE) that are currently shipping.

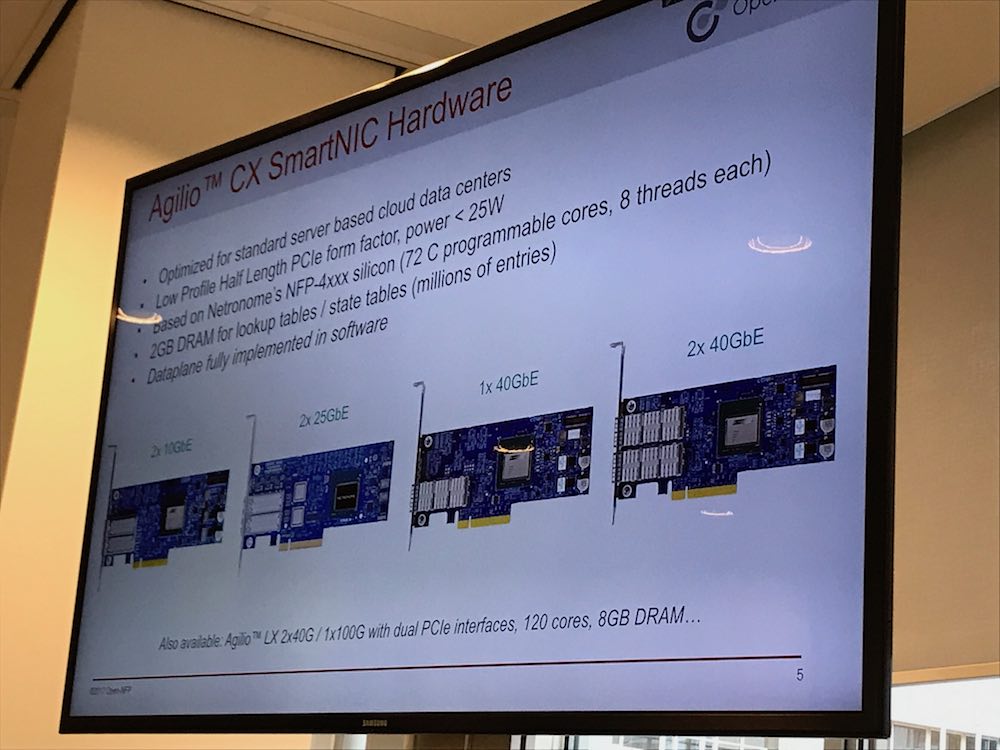

Netronome Agilio SmartNIC

Netronome was one of the organizers of the workshop I attended, and they have a shipping product. Their SmartNIC products are standard PCIe NICs with various connections that support offloading data plane functionality to the NIC, rather than letting applications or the kernel spend a lot of CPU on it.

These products are not your typical switch or NIC: they basically do not have a default feature set available to use. The Barefoot switch, for example, does not come with a network operating system (NOS), and the NIC always needs to be programmed before any functionality works.

What about OpenFlow?

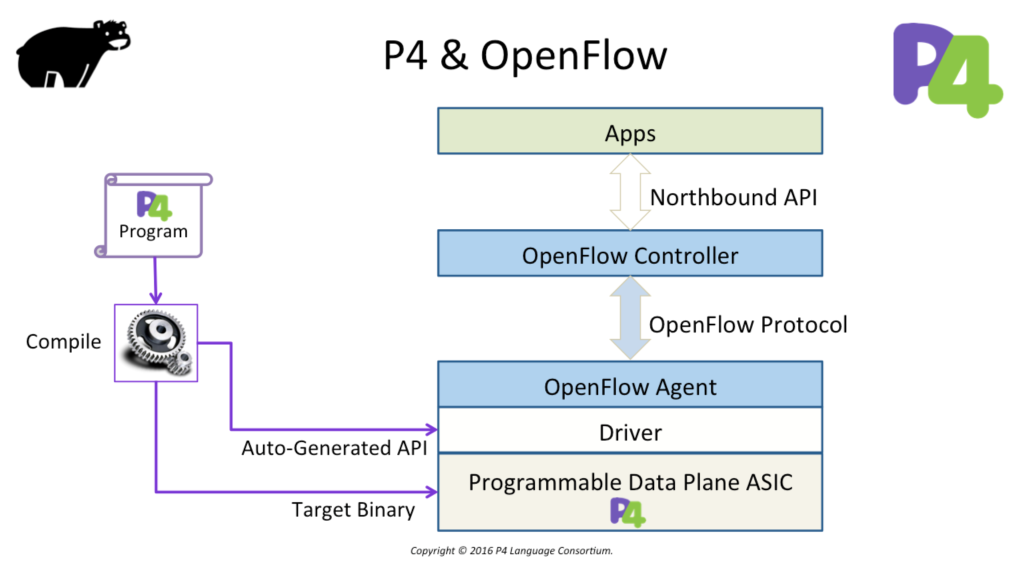

The most frequently asked question is probably: is P4 the successor of OpenFlow? The answer to that is really simple: no! In some cases P4 may even use OpenFlow to program its forwarding table(s). The diagram above is courtesy of P4.org.

The P4 language is designed to program your networking hardware (or even software components). P4 does not contain a control-plane protocol to learn forwarding table entries (those could be learned through a traditional or home-grown routing protocol). In other words: you have to write your own protocol or application to program the forwarding table, or use an existing one (like OpenFlow).
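The split between the two roles can be sketched in a few lines of Python. This is only a toy model under my own assumptions: the `ForwardingTable` class and its `add_entry` and `lookup` methods are hypothetical names, and longest-prefix match on the destination address is just one example of what a table could match on. The data-plane program defines the table and how it is looked up, while something else (a controller, a routing daemon, OpenFlow) has to fill it.

```python
import ipaddress
from typing import Optional


class ForwardingTable:
    """Toy model of a table that the data plane defines but does not fill."""

    def __init__(self) -> None:
        self._entries = {}  # maps an IP network to an egress port

    def add_entry(self, prefix: str, port: int) -> None:
        """Control-plane side: install or overwrite an entry."""
        self._entries[ipaddress.ip_network(prefix)] = port

    def lookup(self, dst_ip: str) -> Optional[int]:
        """Data-plane side: longest-prefix match on the destination.

        Returns the egress port, or None when no entry matches
        (a real device would typically drop or punt the packet).
        """
        addr = ipaddress.ip_address(dst_ip)
        best = None
        for network, port in self._entries.items():
            if addr in network and (
                best is None or network.prefixlen > best[0].prefixlen
            ):
                best = (network, port)
        return None if best is None else best[1]


table = ForwardingTable()
print(table.lookup("10.1.2.3"))   # None: nothing has been programmed yet

# A hypothetical control plane installs two routes.
table.add_entry("10.0.0.0/8", 1)
table.add_entry("10.1.0.0/16", 2)

print(table.lookup("10.1.2.3"))   # 2: the /16 is the longest match
print(table.lookup("10.9.9.9"))   # 1: only the /8 matches
print(table.lookup("192.0.2.1"))  # None: no matching entry
```

The first lookup returning `None` is the whole point: until some control plane calls `add_entry`, the table is empty and the device forwards nothing.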

What about existing vendors?

There are rumours that major networking vendors (Cisco, Juniper, Nokia, etc.) could start supporting (parts of) P4 on their own silicon or use the Barefoot Tofino chip in their products, but no announcements have been made at the time of writing. Vendors are starting to open up their operating systems via programmability features (see Juniper’s Extension Toolkit, JET, for example), but this does not allow you to program new features directly into the hardware (even though that hardware is programmable in some cases, like the Juniper MX).

Summary

P4 will open up a lot of features that are currently unknown or don’t exist. It allows for a fully open and programmable data plane. The use case for this technology would, as always, be the web-scale companies, who want to free up as many CPU resources as possible for other tasks and leave all networking functions to the networking hardware.

For smaller-scale deployments, my opinion is that tools like DPDK, XDP or eBPF already free up sufficient CPU resources and optimise user space or kernel networking enough for most people.

For more information on all the mentioned acronyms and tools, click the links in this blog.

One noticeable fun fact was that during the workshop I attended, I was able to spot one person on a Mac, two corporate Windows machines and all others running Linux 🙂