Recently I moved to a new house, and as a lot of reconstruction was done to bring the house up to date, I took the opportunity to have something I’ve always wanted in my home: a server rack! My previous lab set-ups were either located in my employer’s lab or placed in a storage space. Now I had the opportunity to really make something nice for myself.

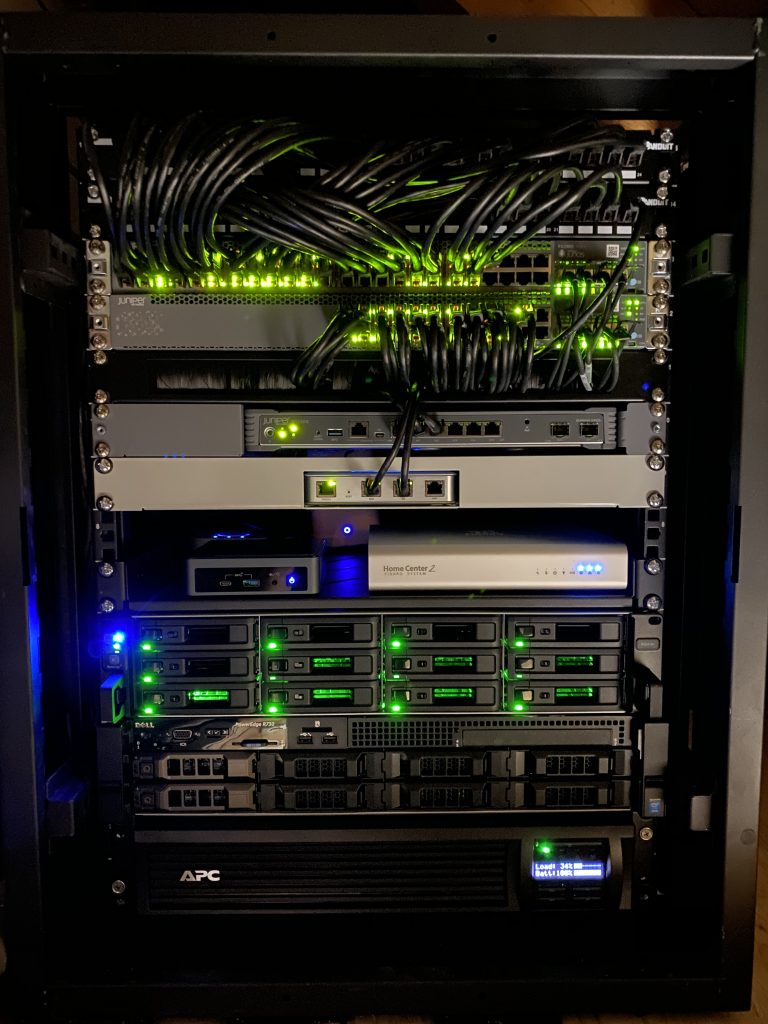

The rack contains both the equipment for running the automation and infrastructure in the house and my home lab. In this post I’d like to show some details of the equipment in it and the basic infrastructure that is running on it.

Location

On top of my garage is an attic that exactly fits a 15RU rack. As my home office is built right below it I had to take care of sound levels, so no high-rpm 40mm fans.

After taking away the top and bottom of the rack it could fit exactly! All Cat.6 cabling is terminating on Panduit patch panels and all wall outlets are nicely numbered. I can say that if a device has a UTP port, it is connected! I had the luck that we were doing a complete overhaul of the wiring in the house, so I could put UTP wall outlets anywhere I wanted to. Overall there are 41 Cat.6 UTP connections in the walls and outside on the house.

I’m actually quite proud of the result!

Patch panels

To connect all house wiring I use 2 Panduit 24 port keystone panels to house all the cables. Cabling used is standard Cat. 6. In total about 1200 meters of UTP cabling went into the house!

Keystone connectors: Panduit CJ688TGBL

Panels: Panduit CP24BLY

Switching

The switching infrastructure of course had to be based on Juniper EX switches. I did look at a Ubiquiti Unifi Switch Gen 2, but didn’t choose it because the number of PoE ports on the non-Pro models is a bit low (16). I already needed 14 PoE ports, so that didn’t really leave room to grow. To get a full PoE model I would have had to go for the Pro version, which is rather expensive.

Since I already owned a Juniper EX2300-24T from a project a couple of years back, it was relatively easy to scout eBay for a good price and get a PoE version, which would allow me to combine the switches in a Virtual Chassis. Eventually I even found a barely used Juniper EX2300-48P for a decent price. With the 48-port model I could wire up all ports in the house to the switch and even support PoE on all those ports. This already came in very useful when I was testing some access points in the living room and could just connect to any outlet! Wiring up all the ports also gave me the option to use the outlet number as switchport number, so I can always easily remember which port goes to which outlet. (This also explains why the cabling run from the patch panel onto the switch may look a bit odd at first.)
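The outlet-to-port numbering can be sketched as a tiny lookup helper. This is only an illustration under assumptions that are not stated in the post: that the EX2300-48P is Virtual Chassis member 0, and that outlet N is patched into the port labelled N. The function name `outlet_to_interface` is made up for this example.

```python
def outlet_to_interface(outlet: int, member: int = 0, ports: int = 48) -> str:
    """Return the Junos interface name for a wall outlet number.

    Assumption: outlet N is patched into the switch port labelled N,
    and the 48-port switch is Virtual Chassis member `member`.
    Junos names copper ports ge-<fpc>/<pic>/<port>, where <fpc> is the
    Virtual Chassis member ID.
    """
    if not 0 <= outlet < ports:
        raise ValueError(f"outlet {outlet} does not exist on a {ports}-port switch")
    return f"ge-{member}/0/{outlet}"


# Example: which interface serves wall outlet 12?
print(outlet_to_interface(12))
```

With a scheme like this there is no separate patch list to maintain: the number on the wall plate is the port number.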

To configure the EX2300 switches in Virtual Chassis, you have to use the on-board 10Gbps SFP+ ports. I picked up a couple of SFP+ DAC cables to connect the switches back-to-back and also connect some other SFP+ ports that I have in the rack.

After powering up the EX2300-48P for the first time I noticed some whining of the fans that I could hear in the office below it. I bought a couple of Noctua NF-A4x20 PWM fans to replace the default fans. This Reddit post has good instructions on how to do the wiring on the Noctuas, as the wire coloring is a bit different from the default ones. After swapping them out, temperatures are pretty much the same and the fan noise is completely gone!

Switching: Juniper EX2300-48P and EX2300-24T in Virtual Chassis

Fans: Noctua NF-A4x20

Internet / Routing

The routing part of the network is still under construction as I purchased a Juniper SRX300 to be the WAN router handling the Internet traffic and connecting the public IP subnet to the Internet like I explained in my previous post.

For my Internet connection I currently have a KPN Bonded-DSL line getting me speeds up to 185Mbps down and 63Mbps up. Unfortunately the area I moved to does not have any fiber-to-the-home, and as it’s a super small village, I don’t expect it to come here anytime soon. Recently the cable provider Ziggo upgraded its network to support 1Gbps down and 50Mbps up, but I have to admit the 185Mbps down is more than enough 99% of the time, and I do like the pretty-much static IPv4 address and native IPv6 that KPN provides (which Ziggo doesn’t support in my area at this time).

As the Juniper SRX300 does not have any PIM slots, there is no way I can connect the DSL signal natively to the SRX. I could have gone for an SRX320 that does support additional slots, but unfortunately the DSL PIM does not support DSL bonding, which I need to run my connection at full speed. Therefore I opted to pick up a FritzBox 7581 DSL modem that I configured in full-bridge mode. This means that the modem basically translates the DSL to Ethernet and does not set up any connection by itself. This ensures I can set up a PPPoE session on my SRX300 and terminate the IPv4 and IPv6 addresses natively on the SRX, without having to go through some double-NAT set-up.

Now since KPN is also my TV provider, I receive the live TV signal over multicast in another VLAN. I have not taken the time to set this up on the SRX yet. The signal is received with a TTL of 1, so running PIM on the SRX is not an option, and IGMP Proxy is not supported. There is an alternative that could work, but I still have to test that out (and request a maintenance window, as the network is in production ;).

Because I have not figured out the multicast set-up on the SRX yet, I’m using the old router I used in my previous home set-up, which is the Ubiquiti USG. It performs quite well and after figuring out the PPPoE, multicast and IPv6 set-up it’s very stable. I picked up a rackmount for it, so it looks nice in the rack! I’ll be sure to cover the configuration of the SRX when it’s finished, as I haven’t been able to find anyone running a KPN IPTV set-up over a Juniper SRX yet. For the USG I used some parts of this configuration.

Modem (bridged): FritzBox 7581

Router and Firewall: Juniper SRX300 / Ubiquiti USG

Wireless

For wireless connectivity I’m using the same set-up I’ve been using for a few years now. I really enjoy working with the Ubiquiti Unifi line of products and they have proven to be really stable and performing well.

In the living room I have placed one Unifi UAP-AC-HD centrally in the room. I initially planned on adding one on the second floor (first floor for Europeans 😉 ), but the AP has a great range so even in bed I still get full performance from the access point.

In my office I needed a second UAP-AC-HD, as it is too far away from the living room and I wanted to have full Wi-Fi coverage and speed when I’m working.

The third and fourth access points are Unifi AP-AC-PRO models. They cover my front- and backyard and are placed outside on the house. They are water resistant, although they are quite out in the open now, so I have to wait and see how long they last in full rain and winter season.

Wireless: 2x Unifi UAP-AC-HD and 2x Unifi AP-AC-PRO

Home Automation

For automating lights and heating in the house I use a number of appliances. I won’t focus too much on the details here as that would be enough for a separate post. I use a combination of systems and I’m still working on combining everything together in Home Assistant at some point.

Currently I use a number of Philips Hue lights, so a Hue Bridge v2 is connected to the network.

Next I use quite a few Z-Wave based appliances, like dimmers, switches and heating equipment. To control those I currently use a Fibaro HomeCenter 2. As more devices are WiFi based, I may move all Z-Wave appliances over to Home Assistant at some point, but the Fibaro solution has served me well for a number of years. Fortunately the antenna is strong enough that it can reach throughout the entire house from the server room. Recently Fibaro released HomeCenter 3, which has some advantages over my version, but not enough to justify an upgrade.

Next I use a Eufy Video Doorbell. I used to have an original Ring, from right after they rebranded from DoorBot, but that got broken during the move. I really liked that Eufy has a local storage solution connected to the network, rather than paying a fee for a cloud recording option as with Ring. I’m happy I made the switch and really like the quality of the Eufy!

Finally I monitor the power usage in my house by connecting to the P1 port on my ‘Smart meter’. Fortunately the Dutch government mandated an open interface on every smart meter installed in houses. I use the great project DSMR Reader to monitor power usage over time, running on an Upboard that I had lying around.
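To give an idea of what comes out of the P1 port: the meter periodically sends a plain-text "telegram" in which each reading is identified by an OBIS code. The sketch below is not how DSMR Reader works internally; it is a minimal illustration that parses a few such lines. The sample telegram and its values are made up, the header, timestamps and CRC of a real telegram are ignored, and the names `SAMPLE_TELEGRAM` and `parse_telegram` are hypothetical.

```python
import re

# One reading per line, e.g. 1-0:1.8.1(001234.567*kWh)
OBIS_LINE = re.compile(
    r"^(?P<obis>\d+-\d+:\d+\.\d+\.\d+)\((?P<value>[\d.]+)\*(?P<unit>\w+)\)$"
)

# Made-up sample values, trimmed to three readings.
SAMPLE_TELEGRAM = """\
1-0:1.8.1(001234.567*kWh)
1-0:1.8.2(002345.678*kWh)
1-0:1.7.0(00.512*kW)
"""


def parse_telegram(text: str) -> dict:
    """Map each OBIS code to a (value, unit) tuple; skip lines that don't match."""
    readings = {}
    for line in text.splitlines():
        match = OBIS_LINE.match(line.strip())
        if match:
            readings[match["obis"]] = (float(match["value"]), match["unit"])
    return readings


for obis, (value, unit) in parse_telegram(SAMPLE_TELEGRAM).items():
    print(f"{obis}: {value} {unit}")
```

Here 1-0:1.8.1 and 1-0:1.8.2 are the cumulative consumption counters for the two tariffs, and 1-0:1.7.0 is the power currently being drawn. A tool like DSMR Reader handles the serial connection, validation and storage on top of this.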

Home automation:

- Fibaro HomeCenter 2

- Philips Hue Bridge v2

- Eufy Video Doorbell with base station

- Upboard connected to P1 smart meter

UPS

Since I’m working from home, the infrastructure in this house has become critical, and I needed something to keep my Internet connection up and running all the time. The power grid in the village I live in now is not as good as at my previous houses: there are slight glitches from time to time, enough to reboot equipment, so I opted to get a decent UPS. I tried to get one that is not too big for the environment, as I don’t need a 2 hour battery time, but I’d like to be able to survive a dip in power for at least an hour. I opted for an APC UPS of 1000VA capacity. The version I chose has a very simple network integration called APC SmartConnect, as I didn’t want to pay for the way overpriced network module that APC sells for their more enterprise focused products. The SmartConnect tool simply e-mails me when something happens to the UPS, like when it switches to battery power or when it needs a software upgrade. More than enough for my use case at home!

As my DSL modem is not in the server rack, but close to where the phone line is entering the house, I also use a small APC UPS to ensure my modem stays online as well.

UPS: APC SMT1000RMI2UC

NAS

I’ve been a big fan of Synology products for many years. I have owned a Synology NAS since 2007 and have gone through a few models. As this is my first ever home rack, I could finally upgrade to a RackStation! Now as Synology is releasing new products throughout the year, it’s always hard to figure out if the model you picked is going to be replaced in the next upgrade cycle, but it is what it is.

I was using a Synology DS1518+, which is quite a recent model and I’m using this now as a backup NAS in a remote location. I upgraded to a model that was released in the same year. As I wanted some room to grow and expand capacity without replacing all my disks I wanted more slots in the most efficient rackspace.

The model I went for is the Synology RS2418+, which has a quad core Intel Atom C3538 (not vulnerable to the issue with the C2k series) and DDR4 memory. I went for 16GB, which is more than I need, as I’m not looking for the NAS to become a full server. I want it to host files, have blazing fast file transfers and maybe run a few containers. The RackStation was released in the same year as the NAS I’m upgrading from, but the CPU is quite a bit faster, plus it is using DDR4.

I moved the disks from my old NAS over and upgraded the volumes a bit by adding disks. I currently run the following volumes:

- 5 x 8TB WD Red in Synology Hybrid Raid 1 with BTRFS with a usable capacity of 28TB as main data volume

- 4 x 480GB Intel SSD DC S3520 in RAID 0 with BTRFS with a usable capacity of 1.7TB as volume to store Virtual Machines accessible via NFS

With 9 slots filled, this leaves me with 3 empty slots for future capacity. My main storage volume is about 40% filled so that should be fine for quite some time!
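The usable capacities above can be sanity-checked with a bit of arithmetic. The sketch below assumes that SHR-1 with identical disks reserves one disk’s worth of space for redundancy (like RAID 5) and that RAID 0 uses all disks; the function names are made up for this example. The remaining gap to the reported numbers is presumably filesystem and system overhead.

```python
TB = 10**12  # disk vendors count in decimal terabytes
GB = 10**9


def shr1_raw_bytes(disk_count: int, disk_bytes: int) -> int:
    """SHR-1 with identical disks: one disk's worth of space goes to redundancy."""
    return (disk_count - 1) * disk_bytes


def raid0_raw_bytes(disk_count: int, disk_bytes: int) -> int:
    """RAID 0 stripes over all disks without any redundancy."""
    return disk_count * disk_bytes


def to_tib(num_bytes: int) -> float:
    """Convert bytes to binary terabytes (TiB)."""
    return num_bytes / 2**40


main_volume = shr1_raw_bytes(5, 8 * TB)
vm_volume = raid0_raw_bytes(4, 480 * GB)

print(f"Main volume: {main_volume / TB:.0f} TB = {to_tib(main_volume):.1f} TiB")
print(f"VM volume:   {vm_volume / GB:.0f} GB = {to_tib(vm_volume):.2f} TiB")
```

This gives roughly 29.1 TiB for the main volume and 1.75 TiB for the SSD volume, which lines up with the 28TB and 1.7TB mentioned above once overhead is taken off.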

For hosting virtual machines I use a RAID 0 volume as I just want fast SSD based storage and I don’t really care about high availability, because all VMs are backed up on the main data volume, so in case of an SSD failure a restore is easily done.

NAS: Synology RS2418+

Disks: WD Red 8TB (watch out for SMR disks at lower capacities, as they can give you a bad performance in a NAS setup)

SSD: Intel 480GB (look for $60-90 ones on eBay)

Server 1: NUC

The first server that I run 24×7 is an Intel NUC10i7FNK with 64GB RAM and no storage. The NUC is a perfect form factor to host just a few virtual machines with a modest power consumption. I run VMware ESXi 7 on the NUC. Please read the blogs on installing ESXi on this NUC carefully as you will run into issues otherwise.

ESXi has the SSD volume of the NAS mounted via NFS. The virtual machines and containers running on this server (yes I run Docker in a VM) are meant to stay online all the time. This basically means that it runs services that are used in the house, like monitoring my house power usage, AdGuard Home, Home Assistant and NetBox.

Server 1: Intel NUC10i7FNK, 64GB RAM

Server 2: Dell R730

The real powerhouse of the home network is my Dell R730. I wanted to have a beefy server to run virtual network topologies and other experiments on. The server is also not meant to run 24×7, so I don’t really mind power usage too much as the system only runs a few hours a day when needed. I did take a look at hosted options in various cloud offerings, but for the price of renting a decently performant server for a number of months I could also scout eBay and the Dutch classifieds equivalent, marktplaats.nl.

I have been using a Dell R610 with 8 Nehalem cores and 48GB memory for many years, but as I was building out topologies with a number of vMX and vQFX devices it was taking far too long to get everything booted up. As that system was also getting 10 years old, I figured it was time to get it replaced.

After looking at various options, I knew I wanted a high core count (because those virtual network appliances consume a lot of CPU power) and a decent amount of memory. Preferably I wanted a recent CPU architecture, as modern cores are so much more performant than older and more power hungry CPUs. That is why I narrowed it down to servers with at least DDR4 memory, as that brings the selection down to more recent models. Unfortunately these are considerably more expensive than older servers with DDR3 memory.

After searching for quite some time I stumbled upon a guy offering 2 Dell R730 servers with 256GB DDR4 memory each! Unfortunately only 2 CPUs came with the servers, so I guess one server was bought as a spare unit or the CPUs disappeared for another reason. I agreed on a very good price for both of the servers. The memory itself could have gone for almost the price I paid in total. So because the deal was so good I opted to buy both and get 2 CPUs separately to drive the second server.

One of the servers is now in a colocation, hosting a number of servers like a Unifi Controller (I host all Unifi setups in my family) and some other services I host. This server came with 2 Intel Xeon E5-2670v3 12-core CPUs and 256GB of memory and I’m very happy with it!

For the server I run at home I had to buy new CPUs, as it came with empty sockets. As the 8 core machine I had was seriously underperforming, I wanted to get the maximum core count I could get, so I ultimately found a compatible pair of Intel Xeon E5-2699v3 18-core CPUs, giving me a total of 36 cores and 72 threads to work with. Now after a couple of months using them, I understand this is way overkill for a home lab, as I haven’t been able to stress it beyond 40% CPU with quite decent lab topologies. Unfortunately 1 memory stick is broken, but I don’t need that much memory anyway. I could have easily settled for 96/128GB as well.

The version of the chassis I have is using 3,5″ drive bays without any disks, so I picked up some more Intel 480GB SSDs that I also run in my NAS and some disk brackets with 2,5″ adapters as I wanted to install Ubuntu and EVE-NG bare metal on this system. The system even has a 10Gbps NIC on-board, so the server is connected with 10GE using DAC cables to my EX2300 VC, which nicely fills up a few 10GE ports on the switch!

As the power usage of the Dell server is much higher and I don’t need it to run all the time I wanted to have the server off when I’m not using it. Unfortunately I’m locked out of the iDRAC console by a password that the previous owner also doesn’t know and since the iDRAC module on this particular server comes embedded on the motherboard I would need to replace the motherboard to get access again. That’s why I opted to use a much simpler solution/workaround for turning the server on and off remotely. I enabled Wake on LAN on the port I use to manage the system and with a very simple command I can wake the server remotely from another system in the network. This works quite well and saves me a lot of power usage in a year!
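For anyone who wants to do the same without installing a separate tool, waking a machine boils down to sending a "magic packet": 6 bytes of 0xFF followed by the target MAC address repeated 16 times, usually sent as a UDP broadcast. The sketch below is a minimal illustration, not the exact command I use. The MAC address in the usage comment is a placeholder, and the broadcast address and port 9 are common defaults that may need adjusting for your network.

```python
import socket


def build_magic_packet(mac: str) -> bytes:
    """Build a Wake on LAN magic packet: 6 x 0xFF, then the MAC repeated 16 times."""
    hex_digits = mac.replace(":", "").replace("-", "")
    if len(hex_digits) != 12:
        raise ValueError(f"not a valid MAC address: {mac!r}")
    mac_bytes = bytes.fromhex(hex_digits)  # raises ValueError on non-hex input
    return b"\xff" * 6 + mac_bytes * 16


def wake(mac: str, broadcast: str = "255.255.255.255", port: int = 9) -> None:
    """Send the magic packet as a UDP broadcast on the local network."""
    packet = build_magic_packet(mac)
    with socket.socket(socket.AF_INET, socket.SOCK_DGRAM) as sock:
        sock.setsockopt(socket.SOL_SOCKET, socket.SO_BROADCAST, 1)
        sock.sendto(packet, (broadcast, port))


# Usage (placeholder MAC address of the server's management port):
# wake("00:11:22:33:44:55")
```

Keep in mind that Wake on LAN only covers powering on. Shutting the server down again is done from the operating system itself, for example over SSH.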

Server 2: Dell R730, Dual Intel Xeon E5-2699v3 18-core CPU, 256GB DDR4 RAM, 2x 480GB Intel SSD in RAID-1 to run the OS

OS: Ubuntu with EVE-NG Pro

EVE-NG

As mentioned I run EVE-NG Pro bare-metal on the Dell R730 server. I really enjoy working with EVE-NG as it’s so easy to use and offers everything I need to quickly spin up a virtual lab, and I can drag and drop connections to any virtual appliance I want. Of course the main appliances I run are:

Then to have some hosts connected to the networks, I’m using a simple container that has a number of network tools pre-installed. Using a startup-config these are given IP addresses.

I can highly recommend using EVE-NG for any of your network virtualization topology needs as I’ve thrown together rather complex topologies during calls with a customer and could go in demonstrating something very easily!

The Pro version is not really necessary, as the free version already comes with most of the features, but it does give you some useful tools like support for Docker containers and adding/removing connections while the appliances are running. Additionally you support a great product for a minimal yearly fee!

Conclusion

This all seems a bit overkill for running in your own house and especially since most of this could easily run in the cloud, but as an engineer I just love setting this stuff up and I enjoy it every day. I truly enjoy having this in my house and I’m actually kind of proud of it!

If you have any questions or thoughts or want to share your own home network rack, don’t hesitate to leave a reply in the comments or reach out on Twitter!

Move to MIST for wireless, Rick 😉 My racks looked like this as well when I first moved into my newly constructed house in 2005. After 15 years of technology changes, two massive close lightning strikes, and DSL to ADSL to VDSL to fiber, things do not look so neat anymore by a far stretch. APC is the best UPS up to any reasonable size I found of course, and I used to run multiple servers until I realized that e’thing in the house pretty much fits onto Synology @ much lower power/complexity. All the complex stuff is on my Linux workstation anyway.

Good suggestion. I might move to Mist in the near future, but I have to test the AP’s first. Mounting them on the ceiling is a bit difficult as they are reasonably big 😉

It’s true, everything could fit on a decent workstation, but there is no fun in that! As I’m not much hands-on anymore in my job, I need to tinker around with stuff at home.

Next up is testing a decent RIFT topology on the servers 😉

Hi Rick, just came across your site and this article. Great description of all your components! Sounds like a great setup, thanks for sharing.

Thank you!